Abstract

Perspective-aware spatial reasoning involves understanding spatial relationships from specific viewpoints, either egocentric (observer-centered) or allocentric (object-centered). While vision-language models (VLMs) perform well in egocentric settings, their performance deteriorates when reasoning from allocentric viewpoints, where spatial relations must be inferred from the perspective of objects within the scene. In this study, we address this underexplored challenge by introducing Symbolic Projective Layout (SymPL), a framework that reformulates allocentric reasoning into symbolic-layout forms that VLMs inherently handle well. By leveraging four key factors (projection, abstraction, bipartition, and localization), SymPL converts allocentric questions into structured symbolic-layout representations. Extensive experiments demonstrate that this reformulation substantially improves performance in both allocentric and egocentric tasks and enhances robustness under visual illusions and multi-view scenarios, and that each component contributes critically to these gains. These results show that SymPL provides an effective and principled approach for addressing complex perspective-aware spatial reasoning.

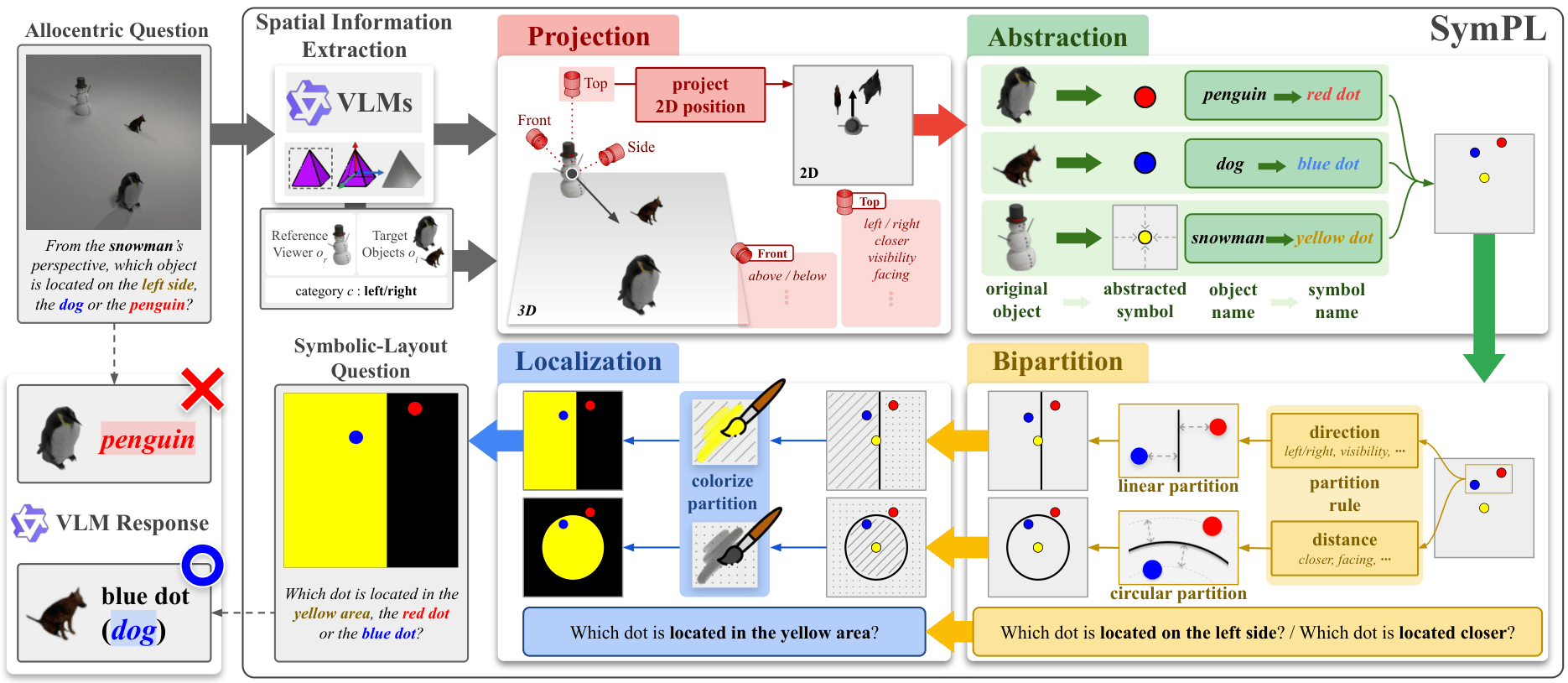

Main Framework

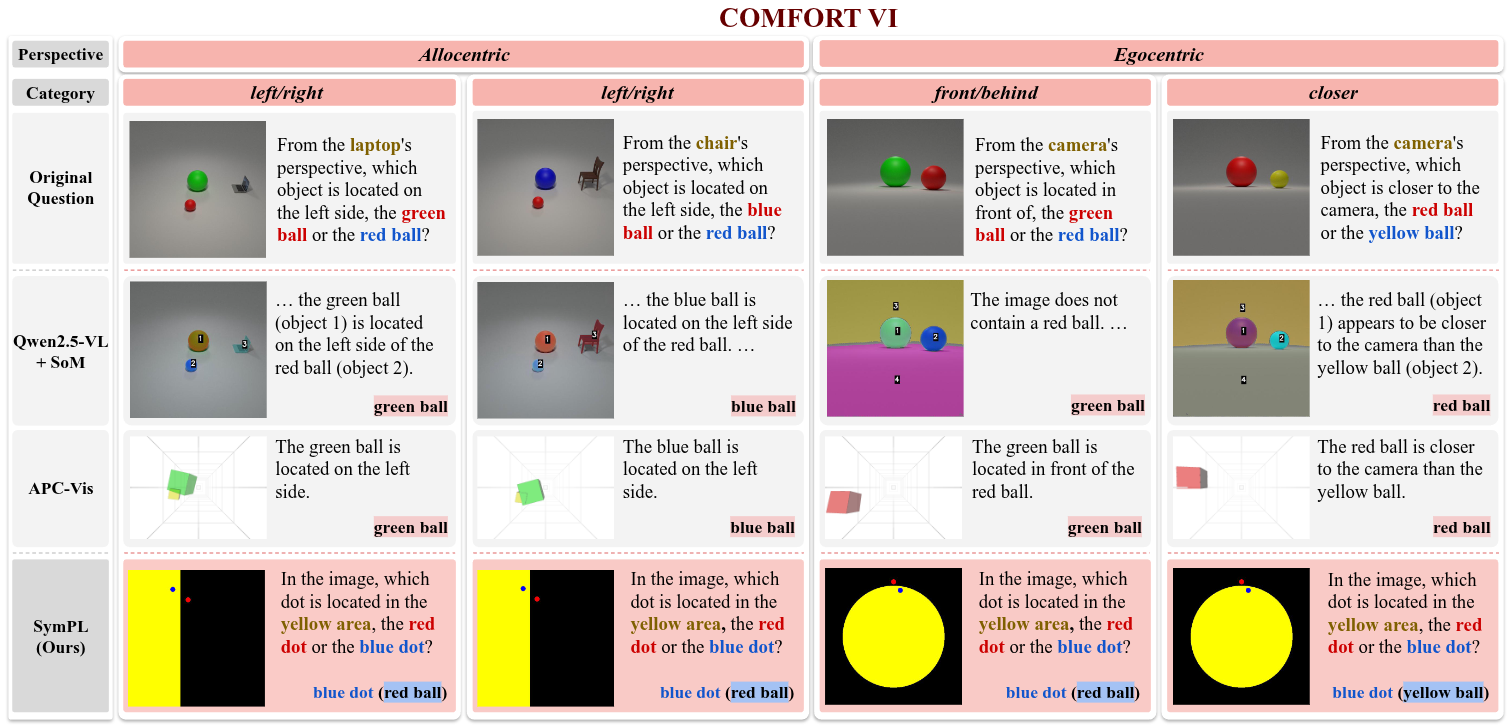

SymPL first extracts structured 3D scene information including object identities, positions, and the reference viewer’s orientation from an allocentric question using VLMs and foundation models. The image is then transformed through projection, abstraction, bipartition, and localization into a symbolic layout question, reformulating allocentric spatial reasoning into a form that VLMs naturally handle well.
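To make the geometry behind this reformulation concrete, the following is a minimal sketch of how an allocentric left/right or front/behind question could be answered once 3D positions and the reference viewer's orientation are known. It is not the authors' implementation. The `SceneObject` class, the `project_top_down` and `locate` helpers, the camera-frame axis convention, and the mapping of each step onto the four factors are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class SceneObject:
    """Hypothetical container for one detected object (camera-frame coordinates)."""
    name: str
    x: float  # to the camera's right
    y: float  # up
    z: float  # depth, pointing away from the camera


def project_top_down(obj: SceneObject) -> Tuple[float, float]:
    # Assumed reading of "projection" and "abstraction": drop the height axis
    # and treat the object as a single point on the ground plane (x, z).
    return (obj.x, obj.z)


def locate(viewer: SceneObject,
           facing: Tuple[float, float],
           target: SceneObject) -> Dict[str, str]:
    # Assumed reading of "bipartition" and "localization": the viewer's facing
    # direction splits the plane into two halves, and we report which half
    # contains the target.
    vx, vz = project_top_down(viewer)
    tx, tz = project_top_down(target)
    dx, dz = tx - vx, tz - vz
    fx, fz = facing
    # 2D cross product: positive means the target lies counterclockwise from
    # the facing direction (seen from above), i.e. on the viewer's left.
    cross = fx * dz - fz * dx
    # Dot product: positive means the target lies ahead of the viewer.
    dot = fx * dx + fz * dz
    return {
        "left_right": "left" if cross > 0 else "right",
        "front_behind": "front" if dot > 0 else "behind",
    }


# A person standing 3 units from the camera and facing it; a cup on the
# camera's right, slightly closer to the camera.
person = SceneObject("person", 0.0, 0.0, 3.0)
cup = SceneObject("cup", 1.0, 0.0, 2.0)
result = locate(person, (0.0, -1.0), cup)
print(result)
```

In this example the cup appears on the right from the camera's egocentric view but lies on the person's left, which is the kind of viewpoint flip that allocentric questions require. In SymPL the final judgment is made by a VLM reading the symbolic layout rather than by closed-form geometry, so this sketch only illustrates the spatial relation that the layout is meant to make visible.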

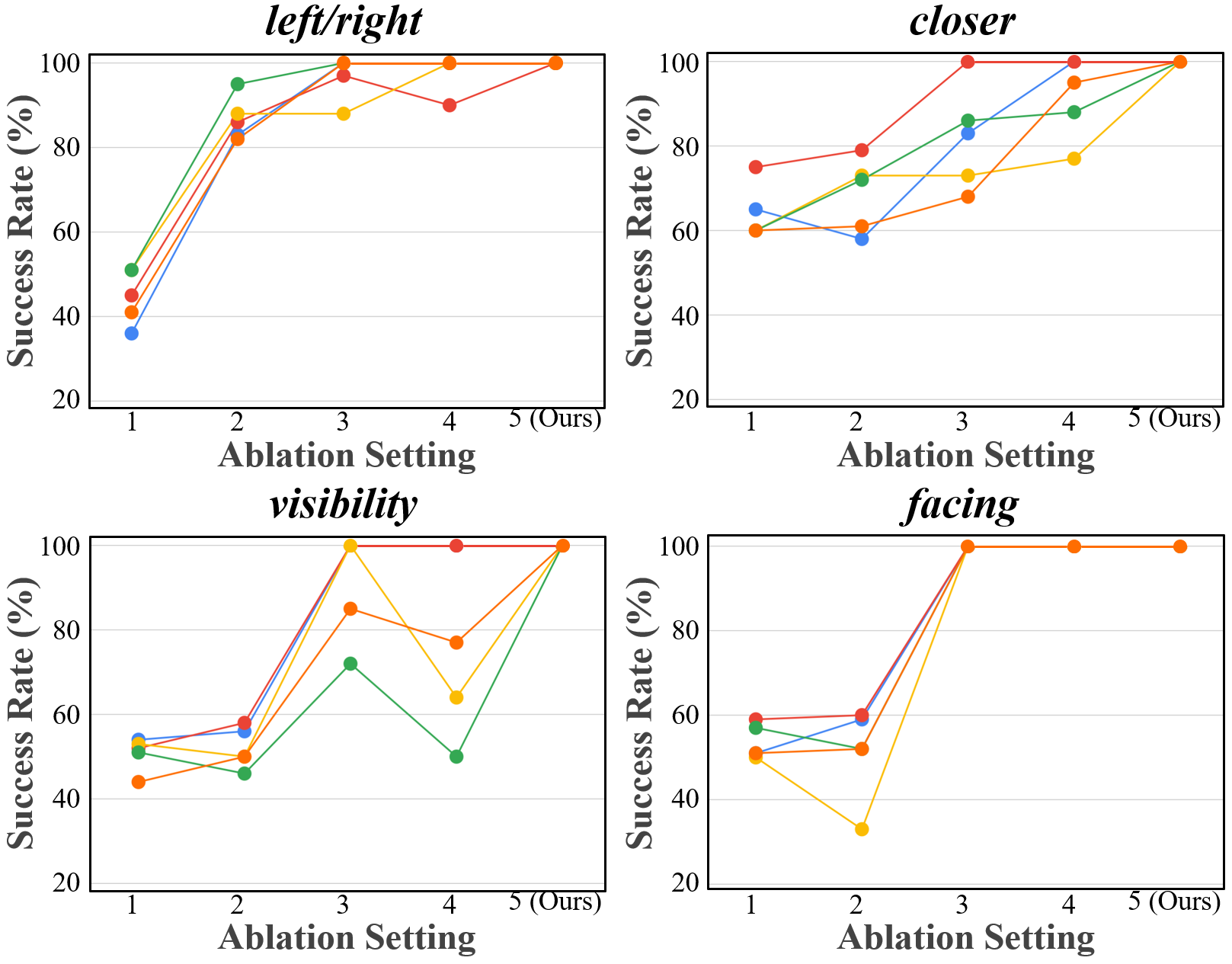

The Effectiveness of Four Factors

We evaluate how much each of the four key factors contributes to the performance gains. To this end, we conduct an ablation study that progressively integrates the factors one at a time.

Only the symbolic layout question, which incorporates all four factors, achieved 100% accuracy.

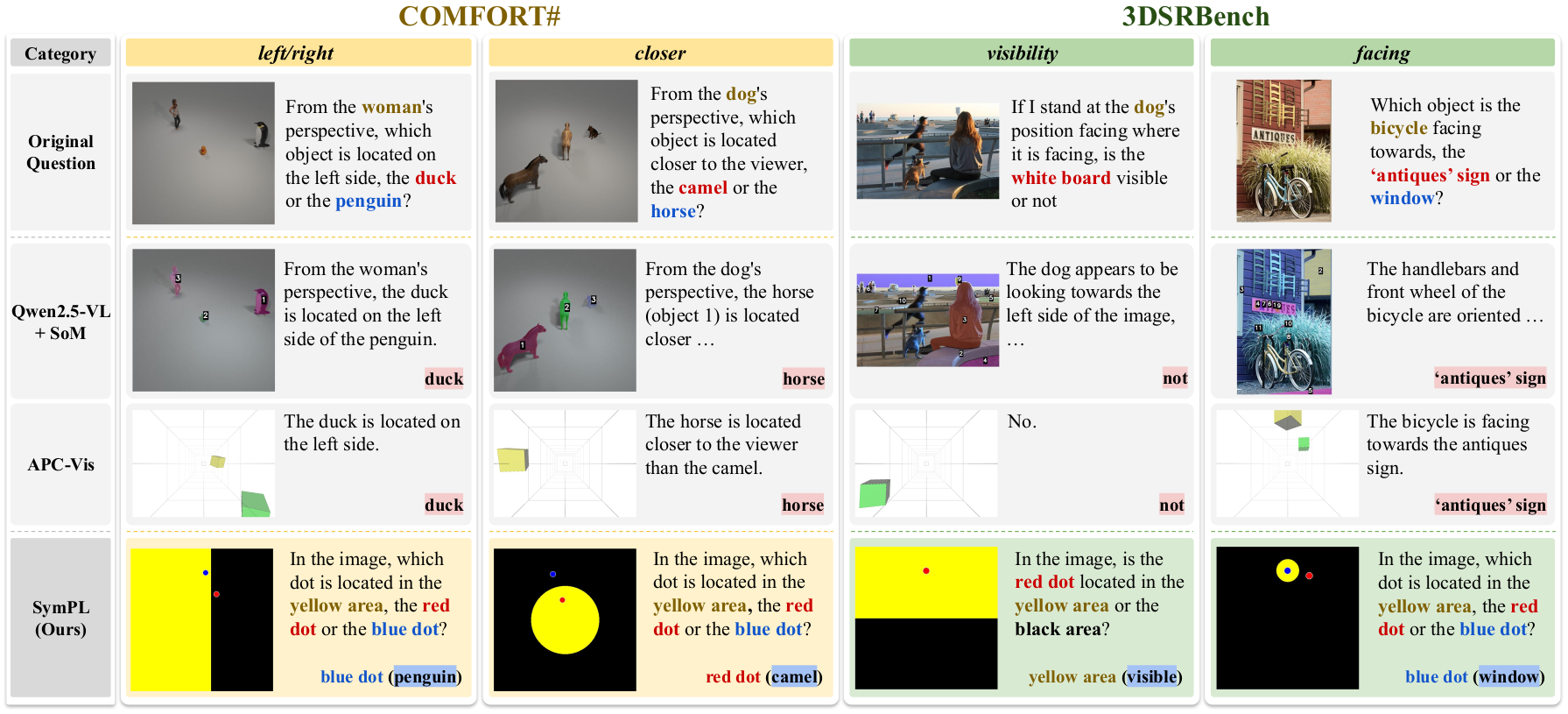

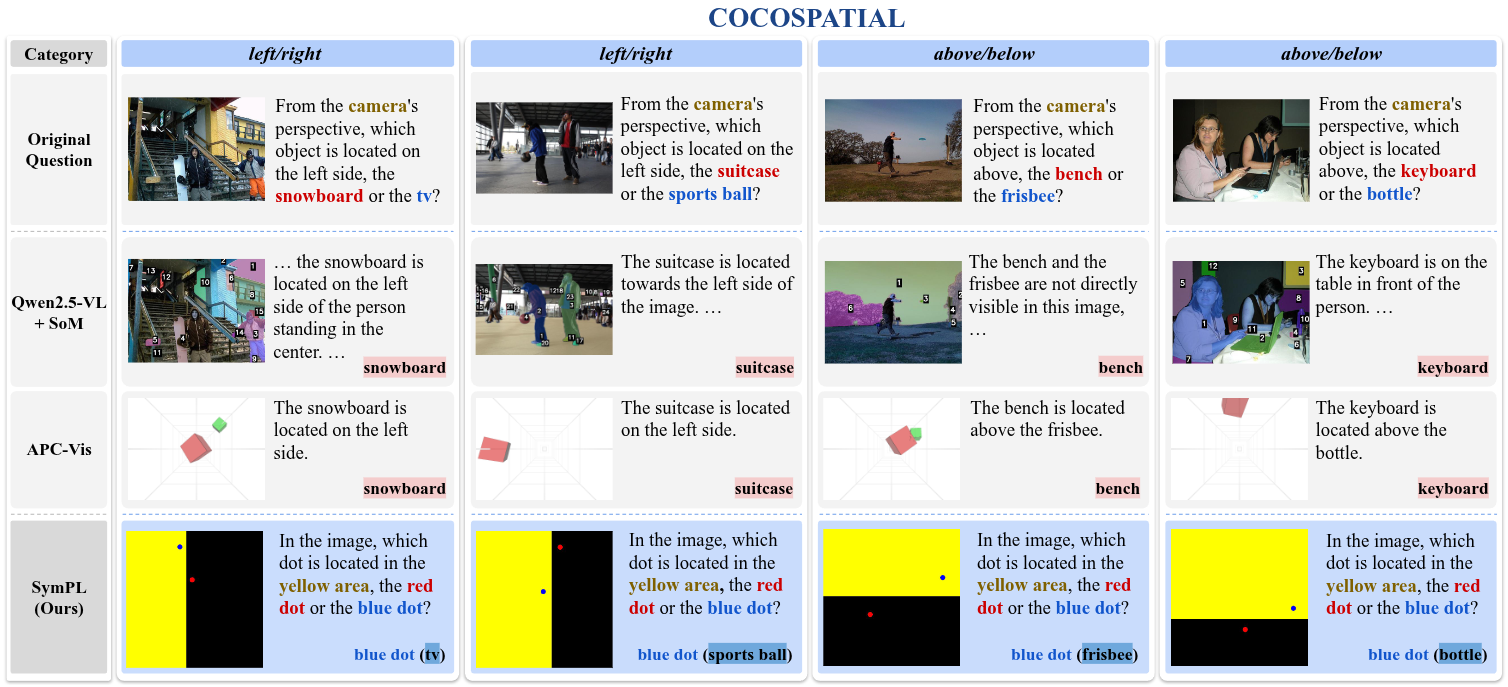

Qualitative Results Across Diverse Benchmarks

BibTeX

@inproceedings{jang2026sympl,
  title={Keep it SymPL: Symbolic Projective Layout for Allocentric Spatial Reasoning in Vision-Language Models},
  author={Jaeyun Jang and Seunghui Shin and Taeho Park and Hyoseok Hwang},
  year={2026},
  url={https://arxiv.org/abs/2602.19117}
}